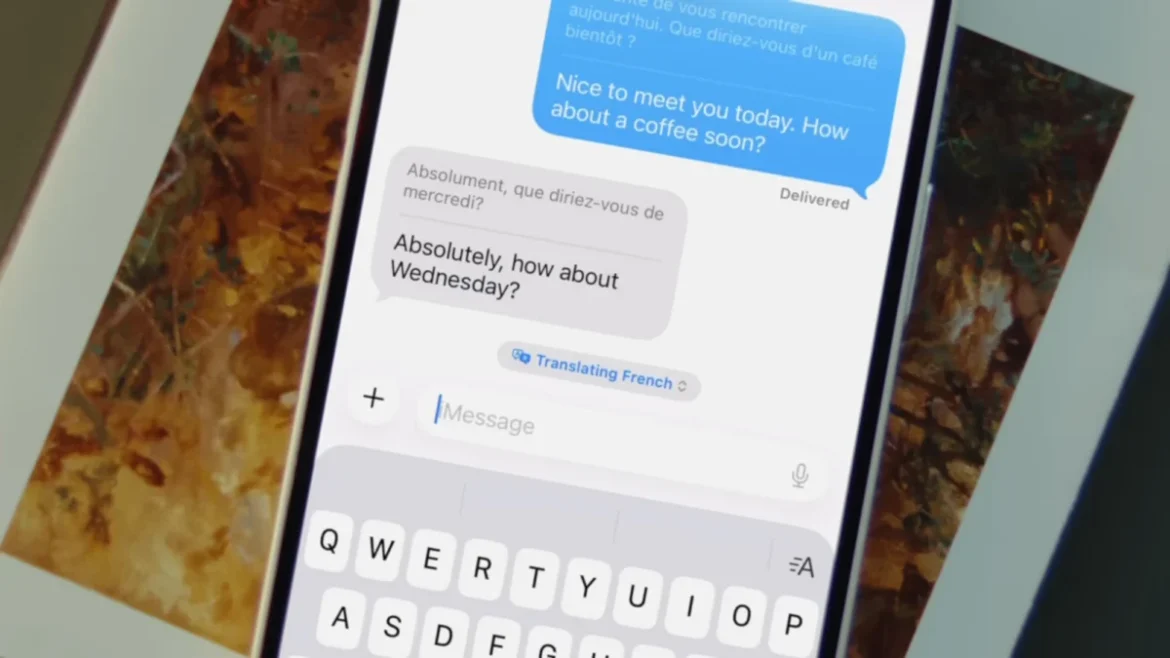

Apple has integrated real-time translation directly into its native Messages application, using Apple Intelligence to bridge communication gaps between users who speak different languages. This feature, a significant step beyond the standalone Translate app introduced in earlier versions of iOS, allows a seamless, bidirectional exchange of translated text within a single chat thread. Using on-device machine learning, the system can automatically convert incoming foreign-language messages into the user’s primary language while also offering to translate outgoing messages for the recipient.

The introduction of this feature marks a pivotal moment in Apple’s broader strategy to embed artificial intelligence into the core user experience of its hardware. Unlike previous translation tools that required users to copy and paste text into a separate application, the new integration in Messages functions as a persistent layer of the interface. This shift is designed to facilitate more natural conversations, particularly for international business travelers, expatriates, and language learners who require immediate linguistic assistance without interrupting the flow of digital interaction.

The Evolution of Apple Intelligence and Translation Services

The journey toward real-time, integrated translation began several years ago and has reached its most complete form so far with the rollout of Apple Intelligence. To understand the current landscape, it helps to look at the chronology of Apple’s linguistic software development. In 2020, with the release of iOS 14, Apple introduced the standalone Translate app, which provided a foundational tool for text and voice conversion. Over the subsequent years, Apple expanded these capabilities into Safari, allowing for full-webpage translations, and later introduced "Live Text," which enabled the translation of text found within photos and the camera’s viewfinder.

In June 2024, during the Worldwide Developers Conference (WWDC), Apple unveiled Apple Intelligence, a personal intelligence system that combines generative models with personal context. This system requires significant hardware resources, specifically the A17 Pro chip or later, and a minimum of 8GB of RAM. Consequently, the real-time translation features in Messages are currently limited to the iPhone 15 Pro, iPhone 15 Pro Max, and the entire iPhone 16 lineup, as well as M-series iPads and Macs. This hardware-software synergy ensures that complex linguistic processing occurs locally on the device, maintaining Apple’s rigorous standards for user privacy.
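The eligibility rule described above can be sketched as a small check. This is only an illustration of the device list as stated in this article, not an Apple API: the `Device` type, the `supports_messages_translation` function, and the string labels are hypothetical names chosen for the example.

```python
from dataclasses import dataclass

# iPhone 15 models named in the article as supported.
# These are plain marketing names used as illustrative labels,
# not Apple hardware identifiers.
SUPPORTED_IPHONE_15_MODELS = {"iPhone 15 Pro", "iPhone 15 Pro Max"}


@dataclass(frozen=True)
class Device:
    name: str    # marketing name, e.g. "iPhone 16 Plus"
    family: str  # "iPhone", "iPad", or "Mac"
    chip: str    # e.g. "A17 Pro", "A18", "M2"


def supports_messages_translation(device: Device) -> bool:
    """Apply the compatibility list given in the article (assumed, not official)."""
    if device.family in ("iPad", "Mac"):
        # The article limits iPads and Macs to M-series chips.
        return device.chip.startswith("M")
    if device.family == "iPhone":
        # iPhone 15 Pro / Pro Max, plus the entire iPhone 16 lineup.
        return (
            device.name in SUPPORTED_IPHONE_15_MODELS
            or device.name.startswith("iPhone 16")
        )
    return False
```

For example, `supports_messages_translation(Device("iPhone 15", "iPhone", "A16 Bionic"))` returns `False`, because the standard iPhone 15 is not on the list above.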

Technical Implementation and User Configuration

To activate real-time translation within a conversation, users must first ensure that Apple Intelligence is enabled on their compatible device. This is managed through the "Apple Intelligence & Siri" menu in the iOS Settings app. Once the system is active, the process for enabling translations within the Messages app is straightforward but must be configured on a per-contact basis, allowing users to customize their experience depending on who they are communicating with.

Within a specific message thread, the user taps the contact’s name at the top of the interface to access the conversation settings. A new toggle labeled "Automatically Translate" appears in this menu. Upon enabling this switch, the user is prompted to select the target language. Currently, the system supports 20 major languages, including English, Spanish, French, German, Mandarin Chinese, Japanese, and Korean, with more expected in future software updates.

Once configured, the Messages interface adapts to the selected language. Every message the user sends in their native language displays a secondary, translated bubble immediately beneath the original text. Conversely, any message received in the foreign language is accompanied by a translation into the device’s default language, such as English. This dual-display method is particularly effective for language acquisition, as it allows users to see the syntax and vocabulary of both languages side by side.
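The per-contact setting and the dual-bubble display can be modeled in a few lines. The sketch below is a conceptual illustration only and does not reflect how Messages is implemented: `ThreadSettings`, `Bubble`, `render_message`, and the dictionary-backed `toy_translate` are hypothetical names, and the toy translator stands in for the real on-device model.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical translator signature: (text, source_lang, target_lang) -> text
Translator = Callable[[str, str, str], str]


@dataclass
class ThreadSettings:
    """Per-conversation state, mirroring the "Automatically Translate" toggle."""
    auto_translate: bool = False
    contact_language: Optional[str] = None  # e.g. "es"


@dataclass
class Bubble:
    text: str
    is_translation: bool


def render_message(
    text: str,
    outgoing: bool,
    settings: ThreadSettings,
    device_language: str,
    translate: Translator,
) -> List[Bubble]:
    """Return the original bubble, plus a translated bubble when enabled."""
    bubbles = [Bubble(text, is_translation=False)]
    if not settings.auto_translate or settings.contact_language is None:
        return bubbles
    if outgoing:
        # The user's message is translated for the recipient.
        source, target = device_language, settings.contact_language
    else:
        # The contact's message is translated into the device language.
        source, target = settings.contact_language, device_language
    bubbles.append(Bubble(translate(text, source, target), is_translation=True))
    return bubbles


def toy_translate(text: str, source: str, target: str) -> str:
    """Stand-in translator backed by a tiny lookup table (illustration only)."""
    table = {
        ("Hello", "en", "es"): "Hola",
        ("Gracias", "es", "en"): "Thank you",
    }
    return table.get((text, source, target), text)
```

With `ThreadSettings(auto_translate=True, contact_language="es")` and a device language of `"en"`, an outgoing "Hello" yields two bubbles, "Hello" followed by "Hola". A thread left at its default settings yields only the original bubble, which matches the per-contact behavior described above.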

Supporting Data and Market Context

The move to integrate AI-driven translation into mobile messaging comes at a time when the global language services market is experiencing unprecedented growth. According to industry reports, the market for machine translation is expected to reach a valuation of over $3 billion by 2027, driven largely by the integration of neural machine translation (NMT) into consumer electronics.

Apple’s implementation is a direct response to similar features found in the Android ecosystem. Google’s "Live Translate" feature, available on Pixel devices, has long been a selling point for the search giant’s hardware. However, Apple’s approach differs by emphasizing on-device processing, backed by its "Private Cloud Compute" for heavier tasks. By keeping translation data on the hardware wherever possible, Apple addresses a significant concern for corporate users and privacy-conscious individuals who may be hesitant to send sensitive conversational data to a cloud server for processing.

Data from mobile usage surveys suggests that over 60% of smartphone users regularly interact with individuals who speak a different primary language. For these users, the friction of switching between apps to translate a sentence is a primary deterrent to frequent communication. By removing this friction, Apple is not just providing a utility; it is potentially increasing the total volume of international digital communication.

Expansion Across the Ecosystem: AirPods, FaceTime, and Phone Calls

The translation capabilities of Apple Intelligence extend far beyond the Messages app. For users who own compatible peripherals, such as the AirPods Pro 2 or the latest AirPods 4 with Active Noise Cancellation, the system offers "Live Translation" for spoken dialogue. By tapping a "Live" button within the Translate app, users can hear a real-time audio translation of a foreign speaker directly in their ears. This feature effectively turns the AirPods into a real-time interpreter, a tool that was once the domain of science fiction.

Furthermore, Apple has integrated these tools into its primary communication channels:

- FaceTime: Users can now enable Live Captions during video calls. The system transcribes the speaker’s words and translates them into the viewer’s language in real time, displaying the text as subtitles on the screen.

- Phone App: During standard cellular or Wi-Fi calls, a "Live Translation" option is available via the call controls. This allows for a two-way translated conversation where the AI provides voice and text interpretations of the call as it happens.

- Camera and Visual Look Up: The system can now translate text on signs, menus, and documents instantly through the camera app, with the translated text overlaid on the original image using augmented reality (AR) techniques.

Privacy Implications and On-Device Security

One of the most critical aspects of Apple’s translation rollout is the commitment to on-device processing. In an era where AI models often rely on massive data centers, Apple’s Neural Engine (ANE) handles the heavy lifting of linguistic analysis locally. For tasks processed this way, the contents of a private message or a sensitive business call never leave the device.

For languages that require more complex processing, Apple utilizes its "Private Cloud Compute" (PCC). PCC is a cloud intelligence system designed specifically for private AI processing. When a task is too large for the iPhone’s local chip, the device sends only the necessary data to a server running on Apple Silicon. Critically, this data is not stored, and Apple states that it has no technical means of accessing it, a claim the company says independent security researchers can inspect and verify.

Broader Impact and Future Implications

The long-term implications of integrated AI translation are profound. In the professional world, this technology reduces the barriers to entry for small businesses looking to expand into foreign markets. A merchant in Tokyo can now communicate seamlessly with a customer in New York via iMessage without the need for a human translator or a third-party service.

In the realm of education, these tools serve as a continuous, passive learning environment. By seeing translations in real time during casual conversations, users are exposed to colloquialisms and natural sentence structures that are often missing from formal language-learning software.

As Apple continues to refine its Apple Intelligence models, the accuracy of these translations is expected to improve. The current system already accounts for regional dialects and formal versus informal speech patterns, but future updates will likely incorporate even more nuanced cultural context. For now, the ability to turn an iPhone into a universal translator within the Messages app represents a significant milestone in the democratization of AI, moving it from a novelty to a fundamental tool for global human connection.